As growing demand for data storage drives the expansion of data centers, colocation facilities are becoming increasingly important to enterprises. Colocation brings many advantages to an enterprise: for example, the provider helps manage the IT infrastructure, which reduces management costs. There are two types of colocation facility: carrier-neutral and carrier-specific. In this article, we discuss how they differ.

Carrier-Neutral and Carrier-Specific Data Centers: What Are They?

With the accelerated growth of the Internet, data volumes have grown exponentially, and the number of data centers has surged to meet the needs of companies of all sizes and market segments. Two types of facility offering managed services have emerged on the market.

Carrier-neutral data centers allow multiple carriers to access and interconnect within the facility, so an enterprise can choose the carriers whose services meet its specific business needs. Carrier-specific data centers, by contrast, are monolithic: they support only one carrier, which controls all access to corporate data. At present, most enterprises choose carrier-neutral data centers to support their business development and reduce their exposure to unplanned outages.

Consider an example. In 2021, about one third of the AWS cloud infrastructure was overwhelmed and went down for 9 hours. The outage affected not only millions of websites but also countless devices running on AWS. A week later, AWS was down again for about an hour, taking the PlayStation Network, Zoom, and Salesforce, among others, down with it. A third AWS outage also affected Internet services such as Slack, Asana, Hulu, and Imgur to some extent. Three outages in one month came at an enormous cost to AWS and demonstrated how fragile dependence on a single cloud provider can be.

The example above shows that when an enterprise depends on a single provider, an unplanned outage can disrupt its business and cause heavy losses. To lower the risks of relying on a single carrier, enterprises should choose a carrier-neutral data center and adapt their system architecture to protect their data.

Why Should Enterprises Choose a Carrier-Neutral Data Center?

Carrier-neutral data centers are operated by third-party colocation providers, which are rarely involved in providing Internet access services themselves. As a result, carrier-neutral data centers increase competition in the market and give enterprises more favorable options.

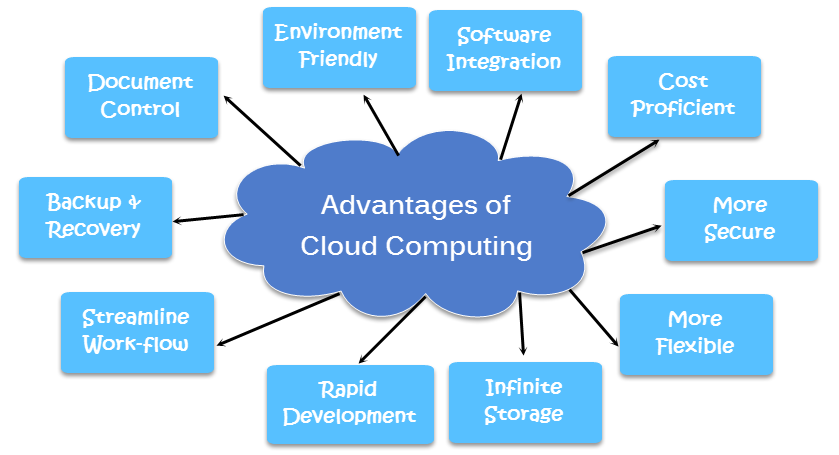

Another advantage of colocating in a carrier-neutral data center is the ability to change Internet providers as needed, without the labor cost of physically moving servers elsewhere. The main advantages of a carrier-neutral data center are summarized below.

Redundancy

A carrier-neutral colocation data center is independent of the network operators and is not owned by a single ISP. Because of this independence, it can offer enterprises multiple connectivity options and so create a fully redundant infrastructure. If one carrier suffers an outage, the carrier-neutral data center can switch servers to another carrier that is still online, which keeps the whole infrastructure running. On the network side, a cross-connect links the ISP or telecom company directly to the customer’s servers, so bandwidth comes straight from the source. This direct link avoids the extra latency that intermediate network switching would add and helps preserve network performance.
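The failover behavior described above can be sketched in a few lines of Python. This is only an illustration, not how any particular facility implements it: the `Carrier` class, the `pick_active_carrier` function, the carrier names, and the latency figures are all hypothetical, and real deployments typically rely on routing protocols and health probes rather than application code.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Carrier:
    """Hypothetical record for one carrier reachable via a cross-connect."""
    name: str
    healthy: bool       # result of an assumed health check
    latency_ms: float   # assumed measured round-trip latency


def pick_active_carrier(carriers: List[Carrier]) -> Optional[Carrier]:
    """Return the healthy carrier with the lowest latency, or None if all are down."""
    healthy = [c for c in carriers if c.healthy]
    if not healthy:
        return None
    return min(healthy, key=lambda c: c.latency_ms)


# Two illustrative carriers available in the same facility.
carriers = [
    Carrier("carrier-a", healthy=True, latency_ms=4.0),
    Carrier("carrier-b", healthy=True, latency_ms=6.5),
]

primary = pick_active_carrier(carriers)    # lowest-latency healthy carrier

# Simulate an outage at the primary carrier, then select again.
carriers[0].healthy = False
fallback = pick_active_carrier(carriers)   # traffic moves to the remaining carrier
```

In a carrier-specific facility the list would contain a single entry, so the same outage would leave `pick_active_carrier` with nothing to return.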

Options and Flexibility

Flexibility is a key advantage of carrier-neutral data center providers. First, the carrier-neutral model lets an enterprise increase or decrease its network capacity as demand changes, and a growing business needs a colocation provider that offers this kind of scalability. Second, carrier-neutral facilities can offer their customers additional services, such as enterprise disaster recovery (DR) options, interconnection, and managed service provider (MSP) services. Whether your business is large or small, a carrier-neutral data center provider may be the best choice for you.

Cost-effectiveness

First, colocation data center solutions provide a high level of control and scalability, including room to expand storage, which supports business growth and reduces expenses. They also lower physical transport costs for enterprises. Second, because all the carriers in the facility compete on price and connectivity, a carrier-neutral data center has a cost advantage over a single-carrier facility. What’s more, since enterprises in a carrier-neutral data center are free to use any carrier, they can choose the one with the best cost-benefit ratio for their needs.

Reliability

Carrier-neutral data centers also offer greater reliability. One of the most important qualities of a data center is uptime that is as close to 100% as possible. Carrier-neutral providers can offer ISP redundancy that a carrier-specific data center cannot. Having multiple ISPs available at the same time gives all clients better protection against outages: even if one carrier fails, another can keep the system running. In addition, the data center provider supplies 24/7 security and uses advanced technology to secure login access at every access point, keeping customer data safe. Multi-layered physical protection of the cabinets also helps protect the equipment and the data it handles.
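A rough calculation shows why ISP redundancy matters for uptime. The sketch below assumes that carriers fail independently and that each one is available 99% of the time; both are illustrative assumptions, not figures from any provider, and real carriers can share conduits or power, which weakens the independence assumption. The function names are hypothetical.

```python
import math

HOURS_PER_YEAR = 24 * 365


def combined_availability(availabilities):
    """Probability that at least one carrier is up, assuming independent failures."""
    all_down = math.prod(1.0 - a for a in availabilities)
    return 1.0 - all_down


def expected_downtime_hours(availability):
    """Expected hours per year without connectivity at the given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR


# Illustrative figures only: each carrier is assumed to be 99% available.
single = combined_availability([0.99])
dual = combined_availability([0.99, 0.99])

print(f"one carrier:  {single:.4%} available, "
      f"{expected_downtime_hours(single):.1f} h/year down")
print(f"two carriers: {dual:.4%} available, "
      f"{expected_downtime_hours(dual):.1f} h/year down")
```

Under these assumptions, a second carrier cuts expected connectivity downtime from roughly 87.6 hours a year to under one hour, which is the kind of gain a carrier-specific facility cannot offer.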

Summary

Every enterprise needs to determine the best option for its specific business needs, but a comparison of carrier-neutral and carrier-specific facilities suggests that a carrier-neutral data center provider is the better option for today’s cloud-based business customers. Working with a carrier-neutral managed service provider brings several advantages, such as lower total cost, lower network latency, and better network coverage. With less downtime and fewer concerns about equipment performance, IT decision-makers have more time to focus on the more valuable areas that drive continued business growth and success.

Article Source: Carrier Neutral vs. Carrier Specific: Which to Choose?
