By 1999, as CPU performance advanced rapidly, existing I/O systems had become a bottleneck that restricted server performance. The telecommunication industry urgently needed a more powerful next-generation I/O standard and technology to keep pace with high-speed communication networks, and InfiniBand originated in this context. InfiniBand switches, which combine high-speed switch hardware with InfiniBand technology, were developed to provide node-to-node communication in IB networks. This post explains what InfiniBand is, what an InfiniBand switch is, and how to bridge InfiniBand to Ethernet.

What Is InfiniBand?

It was not until 2005 that the InfiniBand Architecture (IBA) came into wide use in clustered supercomputers, and since then more and more telecom giants have joined the camp. Today InfiniBand is one of the mainstream interconnect technologies in high performance computing (HPC), enterprise data centers and cloud computing environments. InfiniBand, "infinite bandwidth" as the name suggests, is a high-performance computer networking communication standard. It features high throughput, low latency and high scalability. As a cutting-edge technology, InfiniBand is well suited to communication between servers, between servers and storage, and between servers and the LAN/WAN/Internet. The InfiniBand Architecture uses this technology to build multi-link networks in which data flows between processors and I/O devices with non-blocking bandwidth.

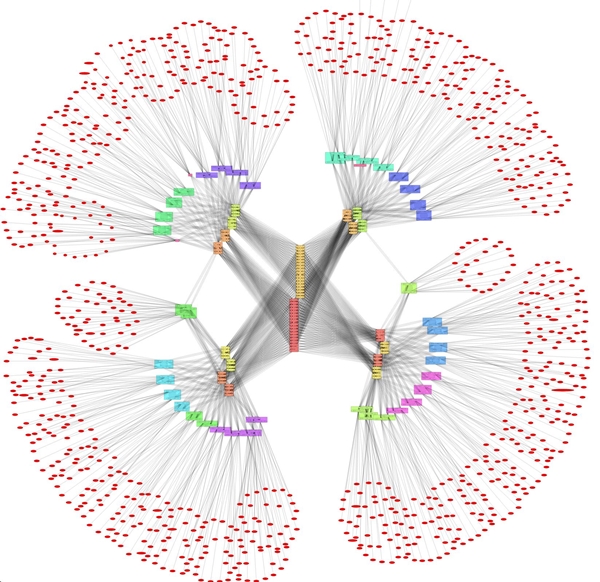

Figure 1: InfiniBand topology of an HPC cluster; an InfiniBand switch is integrated in each chassis.

What Is InfiniBand Switch?

An InfiniBand switch is also called an IB switch. Just as PoE switches, SDN switches and NVGRE/VXLAN switches add specific capabilities to network switch hardware, an IB switch adds InfiniBand capability. Mellanox, Intel and Oracle are three leading brands of InfiniBand switches on the market. InfiniBand switch prices vary by vendor and configuration, and IB switches differ in port count, connector type and IB type. For instance, Mellanox, the leading IB switch vendor, manufactures QSFP/QSFP28 FDR/EDR InfiniBand switches with 8 to 648 ports. On a common 4x link, FDR and EDR InfiniBand support 56Gb/s and 100Gb/s respectively. In addition to the popular FDR 56Gb/s and EDR 100Gb/s InfiniBand, you can choose HDR 200Gb/s switches for higher speed or SDR 10Gb/s switches for lower speed. Other available IB types are DDR 20Gb/s, QDR 40Gb/s and FDR10 40Gb/s.
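The 4x speeds quoted above follow from simple arithmetic: a link's nominal rate is its per-lane rate multiplied by the number of lanes. The sketch below is illustrative only; the per-lane table is an assumption of this example and uses rounded nominal figures rather than exact signaling rates, and the function name `link_rate_gbps` is hypothetical.

```python
"""Nominal InfiniBand link rates: per-lane rate multiplied by link width."""

# Assumed nominal per-lane data rates in Gb/s for each InfiniBand type.
# These are rounded figures; actual lane signaling rates differ slightly
# (for example, FDR lanes signal at 14.0625 Gb/s).
PER_LANE_GBPS = {
    "SDR": 2.5,
    "DDR": 5.0,
    "QDR": 10.0,
    "FDR10": 10.0,
    "FDR": 14.0,
    "EDR": 25.0,
    "HDR": 50.0,
}


def link_rate_gbps(ib_type: str, lanes: int = 4) -> float:
    """Return the nominal rate of an `ib_type` link that is `lanes` lanes wide."""
    if lanes < 1:
        raise ValueError("a link needs at least one lane")
    return PER_LANE_GBPS[ib_type] * lanes


if __name__ == "__main__":
    # Print the nominal 4x rate of every InfiniBand type in the table.
    for name in PER_LANE_GBPS:
        print(f"{name:6s} 4x: {link_rate_gbps(name):g} Gb/s")
```

Running it lists 10, 20, 40, 40, 56, 100 and 200 Gb/s for SDR through HDR, which matches the figures in the paragraph above.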

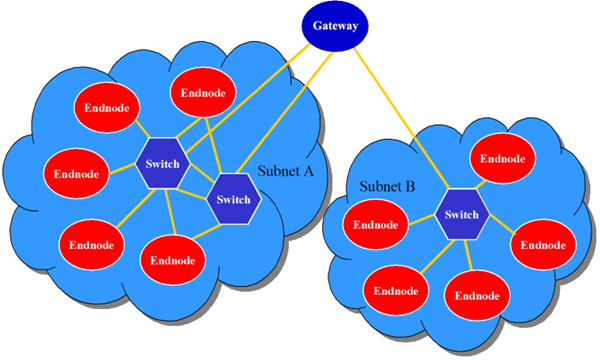

Figure 2: InfiniBand switches in a basic InfiniBand Architecture by Mellanox, providing higher bandwidth, lower latency and enhanced scalability.
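The scalability and non-blocking bandwidth described above come from building large fabrics out of fixed-radix switches arranged as a fat tree. In a non-blocking fat tree, every switch below the top tier uses half its ports toward hosts and half toward the tier above, so each added tier multiplies capacity by k/2. The sketch below computes that capacity. Assuming 36-port switch ASICs (the radix of Mellanox's SwitchX generation, used here as an assumption for illustration), a two-tier fat tree yields exactly 648 ports, consistent with the largest port count mentioned earlier. The function name `fat_tree_hosts` is hypothetical.

```python
"""Host capacity of a non-blocking fat tree built from k-port switches."""


def fat_tree_hosts(k: int, tiers: int) -> int:
    """Return the maximum number of end ports in a non-blocking fat tree.

    Below the top tier, each switch sends half of its k ports down and half
    up, so each extra tier multiplies capacity by k/2:
    one tier gives k, two tiers give k**2 / 2, three tiers give k**3 / 4.
    """
    if k < 2 or k % 2 != 0:
        raise ValueError("switch radix k must be a positive even number")
    if tiers < 1:
        raise ValueError("need at least one tier")
    # A single switch exposes all k ports; every additional tier adds a k/2 factor.
    return k * (k // 2) ** (tiers - 1)


if __name__ == "__main__":
    for t in (1, 2, 3):
        print(f"36-port switches, {t} tier(s): {fat_tree_hosts(36, t)} ports")
```

With 36-port switches this gives 36, 648 and 11664 ports for one, two and three tiers, which shows why a modest switch radix can scale to very large clusters.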

How to Bridge InfiniBand to Ethernet?

Since Ethernet and InfiniBand are two different network standards, a common concern is how to bridge InfiniBand to Ethernet. In fact, many modern InfiniBand switches have built-in Ethernet ports and an Ethernet gateway to adapt to different network environments. But where an InfiniBand switch has only IB ports, how do you connect InfiniBand hosts to multiple gigabit Ethernet switches? You may need NICs such as InfiniBand cards or Ethernet converged network adapters (CNAs) to bridge InfiniBand and Ethernet.

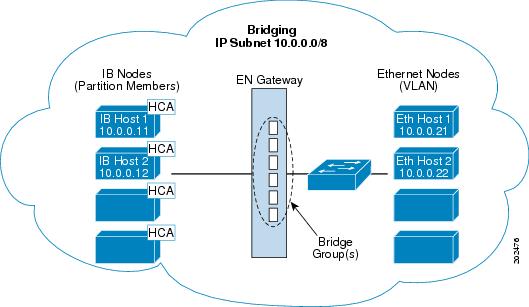

Figure 3: An illustration by Cisco of an Ethernet gateway bridge group bridging InfiniBand to Ethernet.

Alternatively, you can buy the Mellanox InfiniBand switch series based on ConnectX network adapters and SwitchX switch silicon, which supports Virtual Protocol Interconnect (VPI) between InfiniBand and Ethernet. VPI lets the link protocol be set explicitly or adapted automatically, so one physical Mellanox IB switch can support multiple technologies. VPI supports three modes: whole-machine VPI, port VPI and VPI bridging. Whole-machine VPI runs all ports of the switch in either InfiniBand or Ethernet mode. Port VPI runs some ports of the switch in InfiniBand mode and others in Ethernet mode. VPI bridging mode bridges InfiniBand to Ethernet.
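To make the difference between the three VPI modes concrete, the toy model below classifies a switch's per-port protocol assignment. It is a hypothetical illustration only: `LinkProtocol` and `vpi_mode` are invented names, not a Mellanox API or configuration interface, and the rule that bridging needs both protocols present is an assumption of this sketch.

```python
"""Toy model of the three VPI modes (hypothetical, not a Mellanox API)."""
from enum import Enum
from typing import Sequence


class LinkProtocol(Enum):
    INFINIBAND = "ib"
    ETHERNET = "eth"


def vpi_mode(port_protocols: Sequence[LinkProtocol], bridging: bool = False) -> str:
    """Classify a per-port protocol assignment into one of the VPI modes.

    - "whole-machine VPI": every port runs the same protocol
    - "port VPI": some ports run InfiniBand, others run Ethernet
    - "VPI bridging": traffic is bridged between IB and Ethernet ports
    """
    if not port_protocols:
        raise ValueError("switch must have at least one port")
    protocols = set(port_protocols)
    if bridging:
        # Assumption of this model: bridging needs an IB side and an Ethernet side.
        if protocols != {LinkProtocol.INFINIBAND, LinkProtocol.ETHERNET}:
            raise ValueError("bridging requires both IB and Ethernet ports")
        return "VPI bridging"
    if len(protocols) == 1:
        return "whole-machine VPI"
    return "port VPI"


if __name__ == "__main__":
    IB, ETH = LinkProtocol.INFINIBAND, LinkProtocol.ETHERNET
    print(vpi_mode([IB] * 36))
    print(vpi_mode([IB] * 18 + [ETH] * 18))
    print(vpi_mode([IB] * 18 + [ETH] * 18, bridging=True))
```

The model only captures how ports are assigned; on a real switch the mode is chosen through the vendor's own management tools.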

Conclusion

InfiniBand technology simplifies and accelerates interconnection between servers and supports server connectivity to remote storage and network devices. An InfiniBand switch combines IB technology with switch hardware, delivering high capacity, low latency and excellent scalability for HPC, enterprise data centers and cloud computing environments. How do you bridge InfiniBand to Ethernet in a topology built with both InfiniBand and Ethernet switches? Devices such as converged network adapters (CNAs), InfiniBand routers/Ethernet gateways, InfiniBand connectors and InfiniBand cables may be required. For flexible bridging, choose an IB switch with optional Ethernet ports or a Mellanox InfiniBand switch with VPI functionality. Such InfiniBand switches can be expensive, but their advanced features may well justify the price.